If you are building AI systems with the OpenAI Agents SDK and want to evaluate multi-agent conversations, handoffs, and function tools, you can use the Openlayer SDKs to make Openlayer part of your workflow.

This integration guide shows how you can comprehensively trace and monitor your multi-agent systems.

Evaluating OpenAI Agents SDK Applications

You can set up Openlayer tests to evaluate your OpenAI Agents SDK applications in both monitoring and development modes.

Monitoring

To use the monitoring mode, you must instrument your code to publish the requests your AI system receives to the Openlayer platform.

To set it up, follow the steps in the code snippet below:

# 1. Set the environment variables
import os

os.environ["OPENAI_API_KEY"] = "YOUR_OPENAI_API_KEY_HERE"
os.environ["OPENLAYER_API_KEY"] = "YOUR_OPENLAYER_API_KEY_HERE"
os.environ["OPENLAYER_INFERENCE_PIPELINE_ID"] = "YOUR_OPENLAYER_INFERENCE_PIPELINE_ID_HERE"

# 2. Import required modules from OpenAI Agents SDK
from agents import (
    Agent,
    Runner,
    trace as agent_trace,
    set_trace_processors,
    function_tool,
)

# 3. Import and set up the Openlayer tracer processor
from openlayer.lib.integrations.openai_agents import OpenlayerTracerProcessor

set_trace_processors([
    OpenlayerTracerProcessor(
        service_name="your_agent_service",
        version="1.0.0",
        environment="production",
    )
])

# 4. Create your agents with tools and handoffs
@function_tool
async def example_tool(query: str) -> str:
    """Example function tool that agents can use."""
    return f"Processed: {query}"

agent = Agent(
    name="Example Agent",
    instructions="You are a helpful agent.",
    tools=[example_tool],
)

# 5. Run conversations with automatic tracing
async def run_conversation(user_input: str):
    with agent_trace("Agent Conversation"):
        result = await Runner.run(agent, user_input)
        return result

# From now on, all agent conversations, handoffs, and tool calls
# are automatically traced by Openlayer.
# Top-level await works in a notebook or other async context; in a plain
# script, call asyncio.run(run_conversation("How are you doing?")) instead.
result = await run_conversation("How are you doing?")

Once the tracer processor is registered, the integration captures:

- Agent conversations and message exchanges

- Function tool calls and their outputs

- Agent handoffs between different specialized agents

- Context sharing across agent interactions

- Metadata such as latency, token usage, and cost estimates

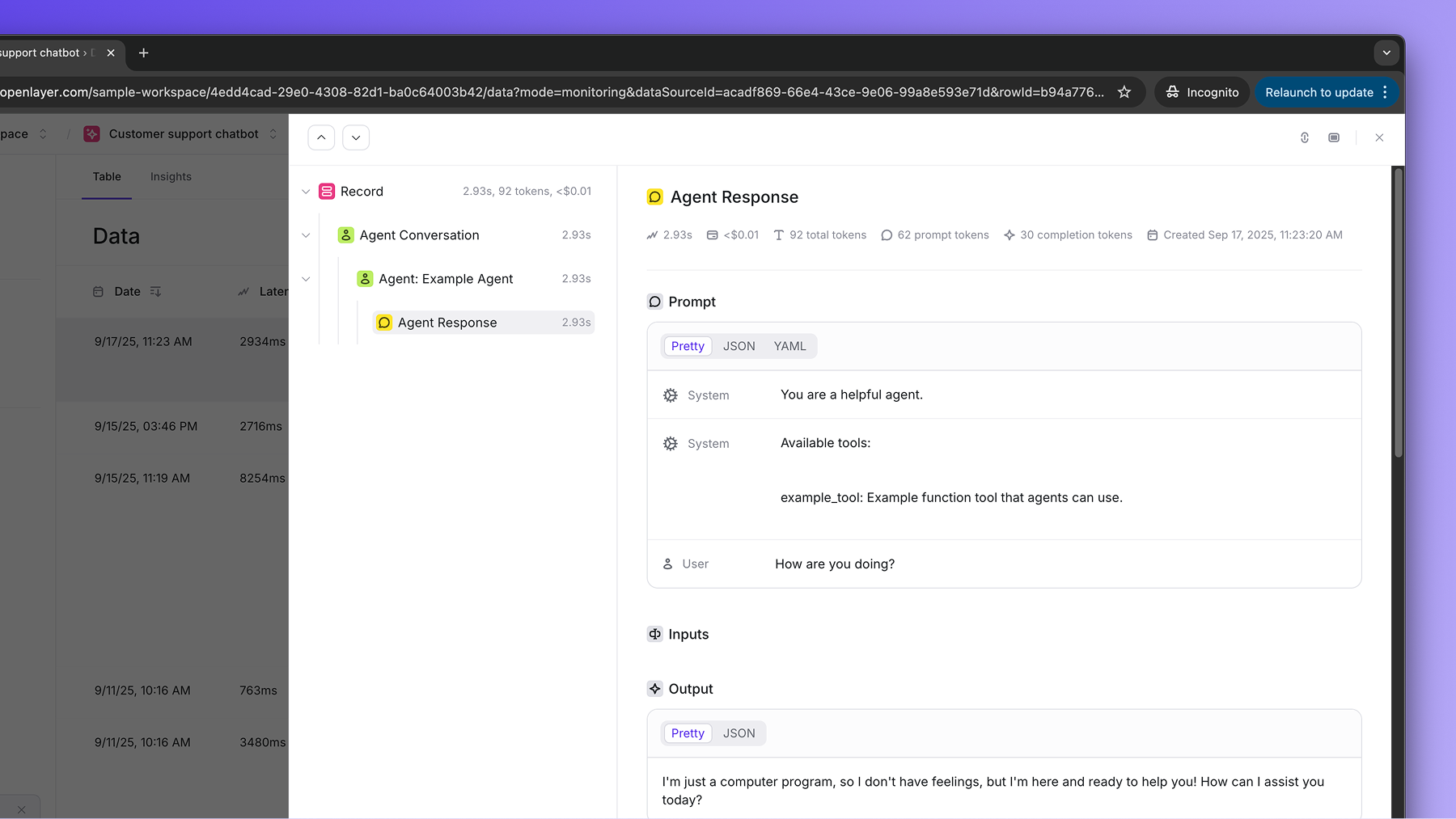

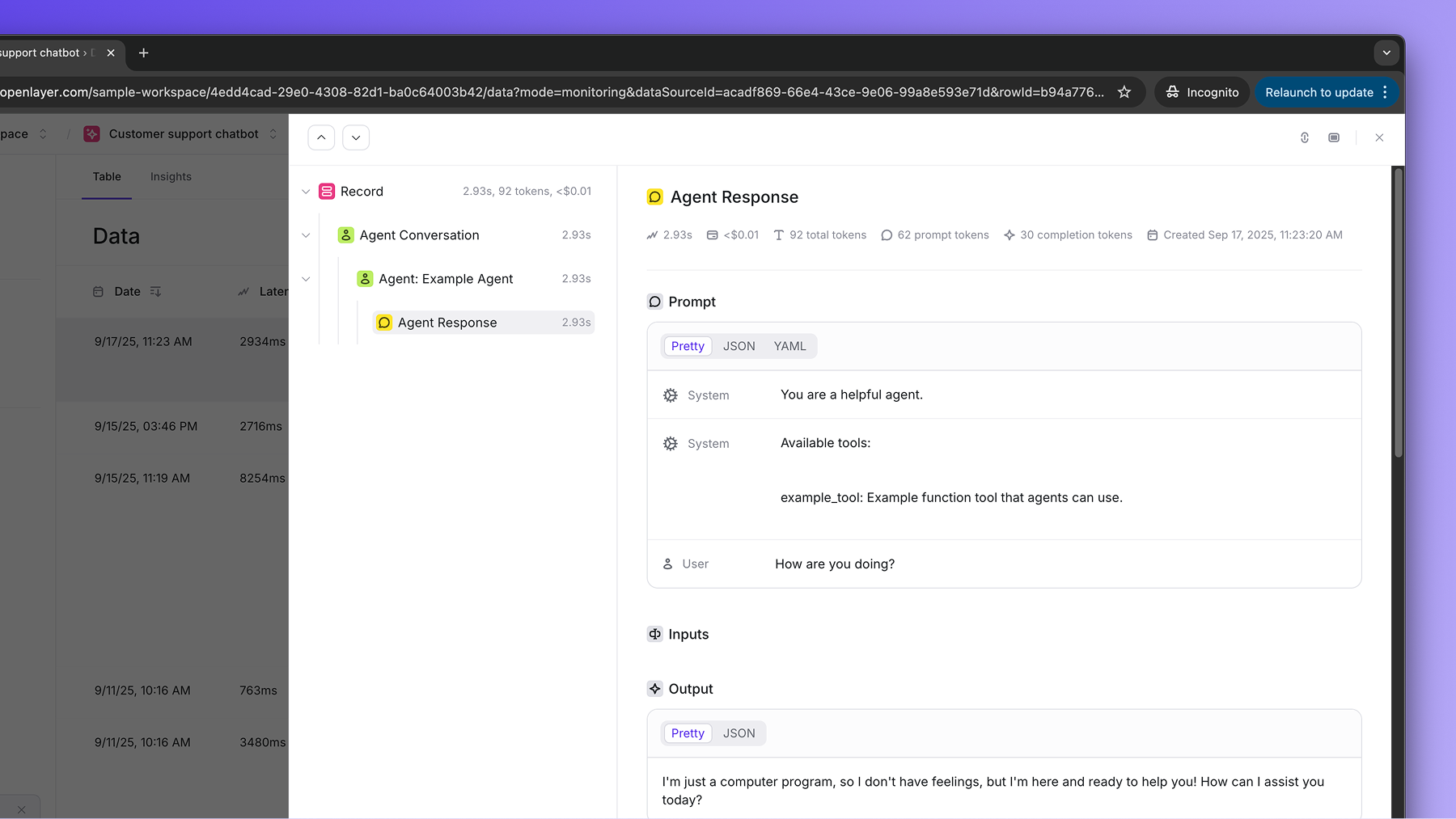

If you navigate to the “Data” page of your Openlayer data source, you can see the complete traces for each multi-agent conversation.

The OpenAI Agents SDK integration automatically captures the full conversation flow, including agent handoffs and tool usage. You can use this together with tracing to monitor complex multi-agent systems as part of larger AI workflows.

Development

In development mode, Openlayer becomes a step in your CI/CD pipeline, and your tests are evaluated automatically whenever a triggering event occurs.

Openlayer tests often rely on your AI system’s outputs on a validation dataset. As discussed in the Configuring output generation guide, you have two options:

- either provide a way for Openlayer to run your AI system on your datasets, or

- before pushing, generate the model outputs yourself and push them alongside your artifacts.
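The second option can be sketched as follows. This is a rough illustration, not Openlayer's required format: the column names `user_input` and `output`, the helpers `add_outputs` and `write_csv`, and the stand-in `fake_agent` are all assumptions made for the example. The Configuring output generation guide remains the reference for what Openlayer expects.

```python
import csv
from typing import Callable, Iterable


def add_outputs(
    rows: Iterable[dict],
    generate: Callable[[str], str],
    input_column: str = "user_input",   # hypothetical column name
    output_column: str = "output",      # hypothetical column name
) -> list[dict]:
    """Return copies of the rows with the system's output added to each one."""
    return [{**row, output_column: generate(row[input_column])} for row in rows]


def write_csv(rows: list[dict], path: str) -> None:
    """Write the rows to a CSV file, using the first row's keys as the header."""
    with open(path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=list(rows[0].keys()))
        writer.writeheader()
        writer.writerows(rows)


# Stand-in for your agent. In practice this would run the agent
# (for example with Runner.run) and return its final output as a string.
def fake_agent(question: str) -> str:
    return f"Echo: {question}"


validation_rows = [{"user_input": "How are you doing?"}]
rows_with_outputs = add_outputs(validation_rows, fake_agent)
```

Calling `write_csv(rows_with_outputs, "validation_with_outputs.csv")` then produces a file you can push alongside your other artifacts.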

For AI systems built with OpenAI Agents SDK, if you are not computing your system’s outputs yourself, you must provide your API credentials.

To do so, navigate to “Workspace settings” -> “Environment variables,” and click on “Add secret” to add your OPENAI_API_KEY.

If you don’t add the required OpenAI API key, you’ll encounter a “Missing API key” error when Openlayer tries to run your AI system to get its outputs.
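A missing key can also be caught locally before anything is pushed. The sketch below is a small fail-fast check you could place at the top of your own entry point. `missing_env_vars` and `require_env` are hypothetical helpers, not part of the Openlayer or OpenAI SDKs, and the check is separate from the error Openlayer itself reports.

```python
import os


def missing_env_vars(names, environ=None):
    """Return the names that are unset or empty in the given environment."""
    env = os.environ if environ is None else environ
    return [name for name in names if not env.get(name)]


def require_env(names, environ=None):
    """Raise a descriptive error if any required variable is missing."""
    missing = missing_env_vars(names, environ)
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
```

For example, `require_env(["OPENAI_API_KEY"])` raises a `RuntimeError` naming the variable if it is not set, and returns silently otherwise.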