Openlayer integrates with LangGraph via LangChain callbacks. As a result, Openlayer automatically traces every run of your LangGraph applications.

This allows you to set up tests, log, and analyze your LangGraph application with minimal integration effort.

Evaluating LangGraph applications

You can set up Openlayer tests to evaluate your LangGraph applications in both monitoring and development.

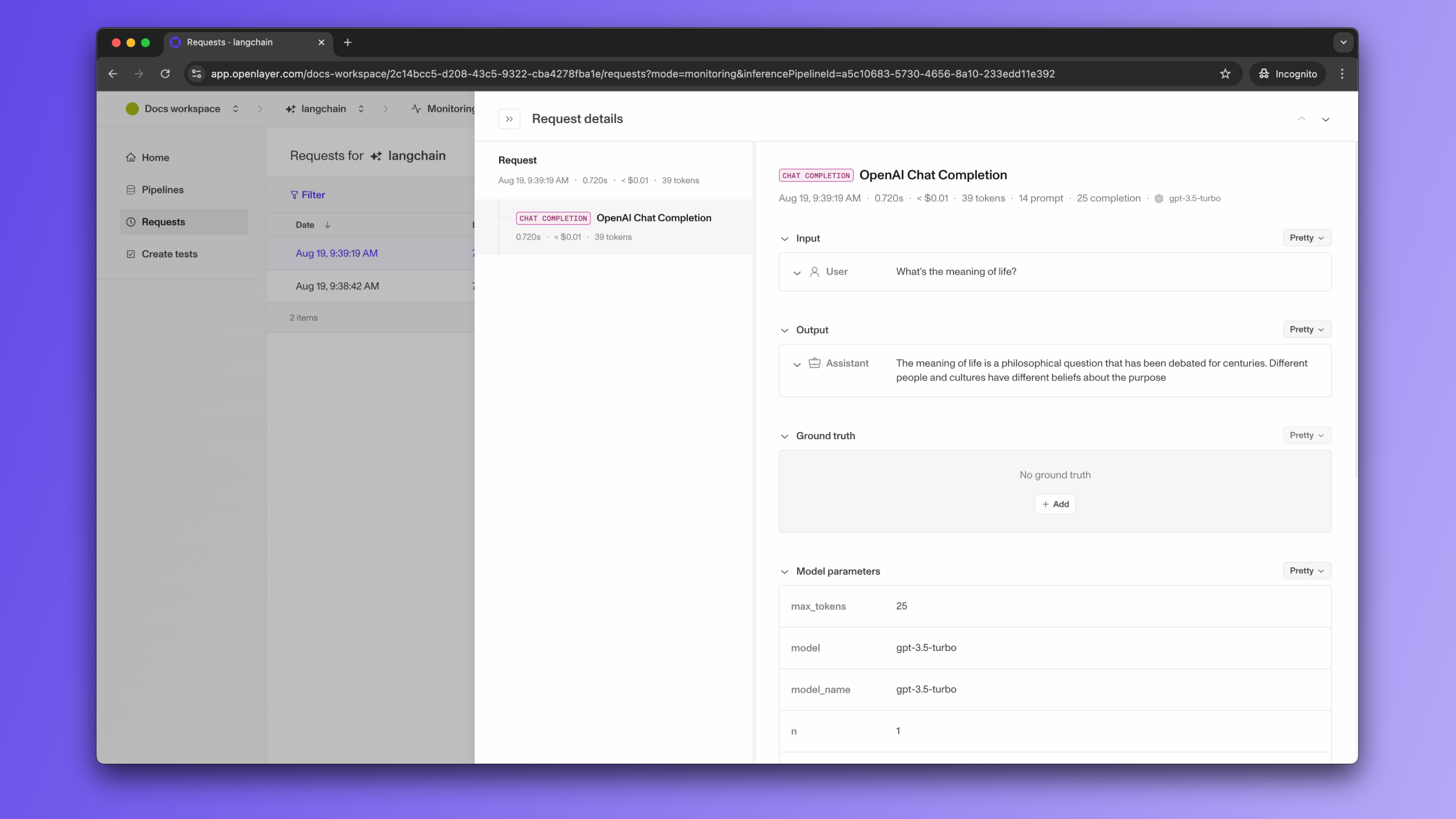

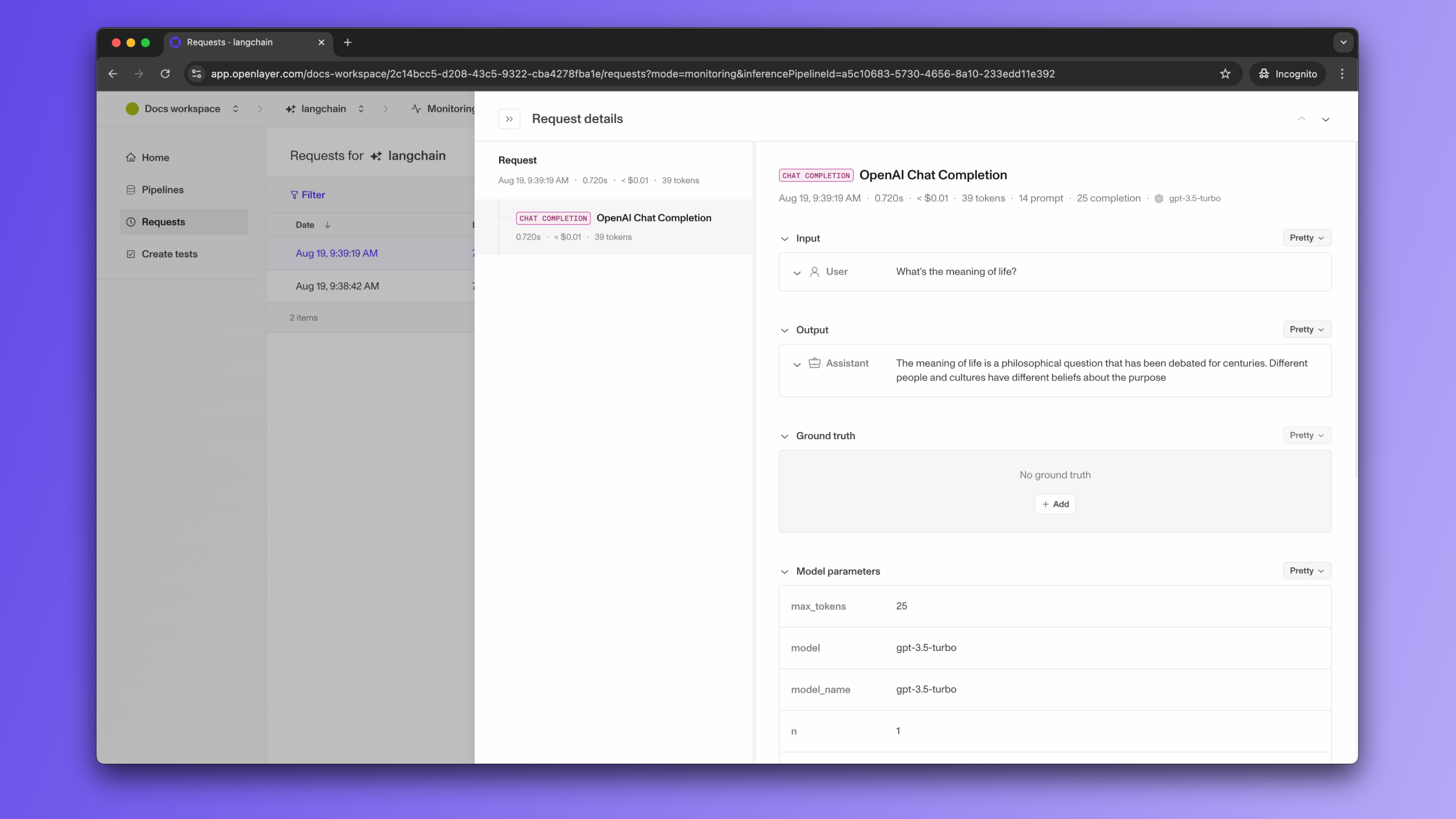

Monitoring

To use the monitoring mode, you must instrument your code to publish the requests your AI system receives to the Openlayer platform.

To set it up, follow the steps in the code snippet below:

# 1. Set the environment variables

import os

os.environ["OPENAI_API_KEY"] = "YOUR_OPENAI_API_KEY_HERE"

os.environ["OPENLAYER_API_KEY"] = "YOUR_OPENLAYER_API_KEY_HERE"

os.environ["OPENLAYER_INFERENCE_PIPELINE_ID"] = "YOUR_OPENLAYER_INFERENCE_PIPELINE_ID_HERE"

# 2. Instantiate the `OpenlayerHandler`

from openlayer.lib.integrations import langchain_callback

openlayer_handler = langchain_callback.OpenlayerHandler()

# 3. Use LangGraph's `stream` method to pass the handler to your LLM/chain invocations

from typing import Annotated

from typing_extensions import TypedDict

from langgraph.graph import StateGraph

from langchain_openai import ChatOpenAI

from langchain_core.messages import HumanMessage

from langgraph.graph.message import add_messages

class State(TypedDict):
    # Messages have the type "list". The `add_messages` function in the annotation defines how this state key should be updated
    # (in this case, it appends messages to the list, rather than overwriting them)
    messages: Annotated[list, add_messages]

graph_builder = StateGraph(State)

llm = ChatOpenAI(model="gpt-4o", temperature=0.2)

# The chatbot node function takes the current State as input and returns an updated messages list. This is the basic pattern for all LangGraph node functions.

def chatbot(state: State):
    return {"messages": [llm.invoke(state["messages"])]}

# Add a "chatbot" node. Nodes represent units of work. They are typically regular Python functions.

graph_builder.add_node("chatbot", chatbot)

# Add an entry point. This tells our graph where to start its work each time we run it.

graph_builder.set_entry_point("chatbot")

# Set a finish point. This instructs the graph "any time this node is run, you can exit."

graph_builder.set_finish_point("chatbot")

# To be able to run our graph, call "compile()" on the graph builder. This creates a "CompiledGraph" that we can invoke with our state.

graph = graph_builder.compile()

# Pass the openlayer_handler as a callback to the LangGraph graph. After running the graph,

# you'll be able to see the traces in the Openlayer platform.

for s in graph.stream(
    {"messages": [HumanMessage(content="What is the meaning of life?")]},
    config={"callbacks": [openlayer_handler]},
):
    print(s)

The code snippet above builds a simple chatbot. However, the Openlayer Callback Handler also works for more complex LangGraph applications, including multi-agent workflows. Refer to the final section of the notebook example for a tracing example with multi-agent workflows.

If the LangGraph graph invocation is just one of the steps of your AI system, you can use the code snippet above together with tracing. In this case, your graph invocations get added as steps of a larger trace. Refer to the Tracing guide for details.

Development

In development mode, Openlayer becomes a step in your CI/CD pipeline, and your tests are evaluated automatically whenever certain events trigger them.

Openlayer tests often rely on your AI system’s outputs on a validation dataset. As discussed in the Configuring output generation guide, you have two options:

- either provide a way for Openlayer to run your AI system on your datasets, or

- before pushing, generate the model outputs yourself and push them alongside your artifacts.
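
As a rough illustration of the second option, the sketch below adds an output column to a small validation set before it is pushed. It is only a sketch under stated assumptions: the `question` and `output` column names and the `run_system`, `add_outputs`, and `to_csv` helpers are hypothetical and not part of Openlayer or LangGraph. In a real project, `run_system` would call your compiled graph instead of echoing its input.

```python
import csv
import io
from typing import Callable, Dict, List


def run_system(question: str) -> str:
    """Hypothetical stand-in for your AI system.

    In a real project this might call your compiled LangGraph graph and
    return the content of the final message.
    """
    return f"echo: {question}"


def add_outputs(
    rows: List[Dict[str, str]],
    predict: Callable[[str], str],
    input_column: str = "question",   # assumed column name
    output_column: str = "output",    # assumed column name
) -> List[Dict[str, str]]:
    """Return copies of `rows` with the system's output added to each row."""
    return [{**row, output_column: predict(row[input_column])} for row in rows]


def to_csv(rows: List[Dict[str, str]]) -> str:
    """Serialize the rows to CSV text, using the first row's keys as header."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=list(rows[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


validation_rows = [
    {"question": "What is LangGraph?"},
    {"question": "What is Openlayer?"},
]
rows_with_outputs = add_outputs(validation_rows, run_system)
print(to_csv(rows_with_outputs))
```

Because the outputs are computed on your side, this route does not require Openlayer to run your system or to hold its API credentials.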

For LangGraph applications, if you are not computing your system’s outputs yourself, you must provide the required API credentials. For example, if your application uses LangChain’s ChatOpenAI, you must provide an OPENAI_API_KEY; if it uses ChatMistralAI, you must provide a MISTRAL_API_KEY; and so on.

To provide the required API credentials, navigate to “Workspace settings” -> “Environment variables,”

and add the credentials as secrets.

If you fail to add the required credentials, you’ll likely encounter a “Missing API key” error when Openlayer tries to run your AI system to get its outputs.
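
To catch a missing credential earlier, it can help to list the keys your application needs and check them locally before pushing. The sketch below is one way to do that, assuming the model-to-variable pairs named above; `REQUIRED_KEY_BY_MODEL` and `missing_credentials` are hypothetical names, and the check only inspects the environment you pass in, not the secrets stored in your Openlayer workspace.

```python
import os
from typing import Iterable, List, Mapping, Optional

# Pairs taken from the examples in this guide; extend for other providers.
REQUIRED_KEY_BY_MODEL = {
    "ChatOpenAI": "OPENAI_API_KEY",
    "ChatMistralAI": "MISTRAL_API_KEY",
}


def missing_credentials(
    model_names: Iterable[str],
    env: Optional[Mapping[str, str]] = None,
) -> List[str]:
    """Return the environment variable names that are unset or empty."""
    env = os.environ if env is None else env
    required = [REQUIRED_KEY_BY_MODEL[name] for name in model_names]
    return [key for key in required if not env.get(key)]


# Example with an explicit environment, so the result does not depend
# on the machine running it. The key value is a placeholder.
example_env = {"OPENAI_API_KEY": "sk-placeholder"}
print(missing_credentials(["ChatOpenAI", "ChatMistralAI"], env=example_env))
# prints ['MISTRAL_API_KEY']
```

Any name this returns is a candidate to add under “Workspace settings” -> “Environment variables.”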