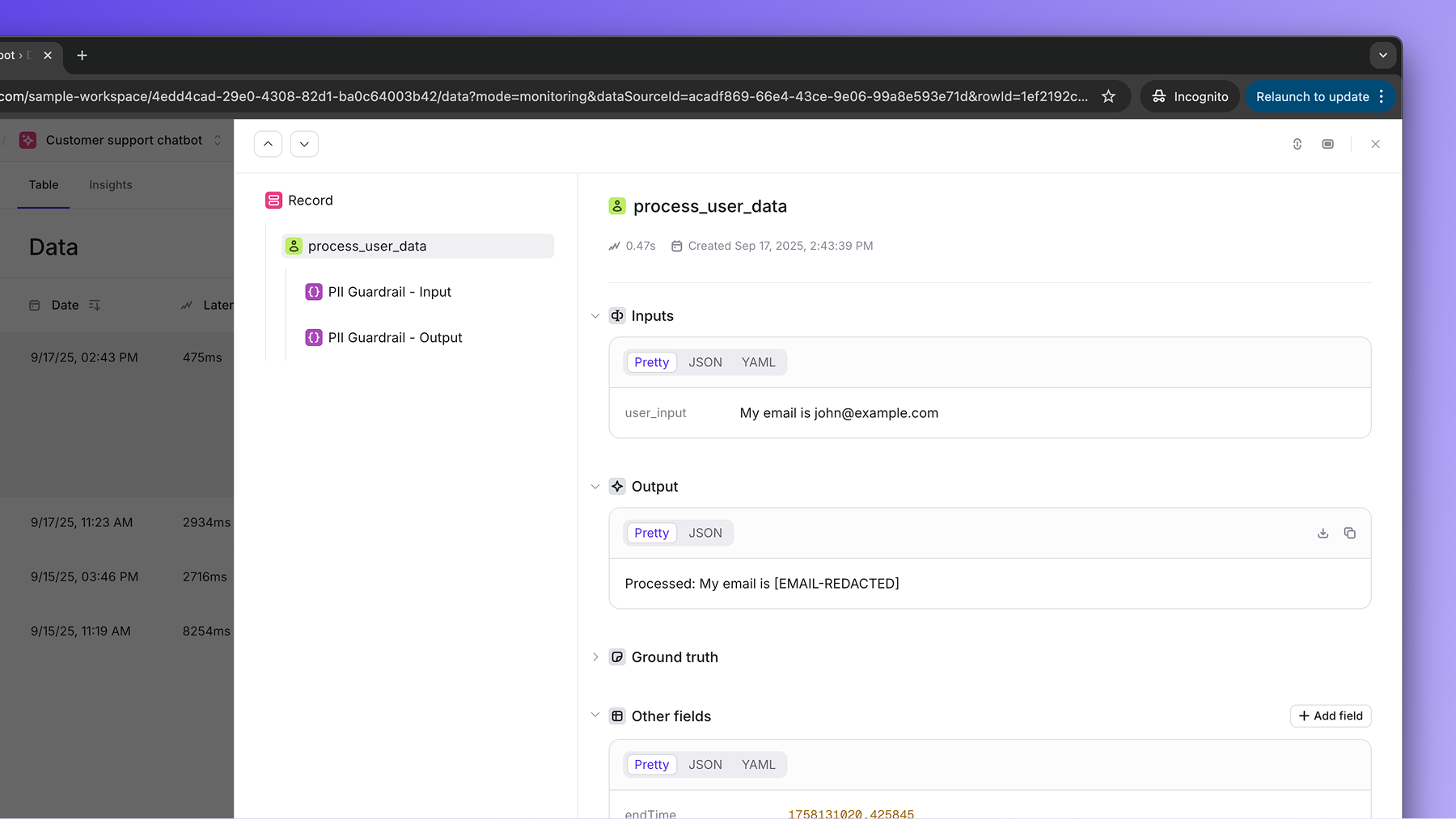

Guardrails are runtime checks that help you enforce constraints on your AI system’s inputs and outputs.

Guardrails vs. Tests

Guardrails complement tests, particularly in monitoring mode. Your Openlayer tests run continuously on your live data and trigger a notification when one fails. Guardrails validate inputs and outputs in real time and block or modify them if they don’t meet your constraints. Together, they give you both proactive coverage (through tests) and reactive protection (through guardrails).

Guardrails library

Openlayer has a Python library for guardrails. You can use one of the built-in guardrails (such as the PII or prompt injection guardrails), or implement custom guardrails following the interface defined in the `BaseGuardrail` class.

You can install it with:

| Guardrail | Extra | Install command |
|---|---|---|
| `PIIGuardrail` | `pii` | `pip install openlayer-guardrails[pii]` |
| `PromptInjectionGuardrail` | `prompt-injection` | `pip install openlayer-guardrails[prompt-injection]` |
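To make the idea of a custom guardrail concrete, here is a minimal, self-contained sketch of a guardrail that can allow, modify, or block a piece of text. It does not use the `openlayer-guardrails` package: `SketchGuardrail`, `GuardrailResult`, `check`, and `EmailRedactionGuardrail` are hypothetical names chosen for illustration, and the real `BaseGuardrail` interface may differ.

```python
import re
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class GuardrailResult:
    """Hypothetical result type: the action taken and the resulting text."""
    action: str  # "allow", "block", or "modify"
    text: str


class SketchGuardrail(ABC):
    """Hypothetical stand-in for a guardrail base class (not the real BaseGuardrail)."""

    @abstractmethod
    def check(self, text: str) -> GuardrailResult:
        """Inspect the text and decide whether to allow, modify, or block it."""


class EmailRedactionGuardrail(SketchGuardrail):
    """Redacts email addresses and blocks text that contains a forbidden phrase."""

    EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

    def __init__(self, forbidden_phrase: str = "ignore previous instructions"):
        self.forbidden_phrase = forbidden_phrase

    def check(self, text: str) -> GuardrailResult:
        # Block outright when the forbidden phrase appears (case-insensitive).
        if self.forbidden_phrase in text.lower():
            return GuardrailResult("block", "")
        # Otherwise replace any email address with a placeholder.
        redacted = self.EMAIL.sub("[REDACTED_EMAIL]", text)
        action = "modify" if redacted != text else "allow"
        return GuardrailResult(action, redacted)
```

Calling `EmailRedactionGuardrail().check(...)` on a string returns the action together with the text to pass along, so the caller can decide whether to continue, substitute the redacted text, or stop.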

With Openlayer tracing

Guardrails work well with Openlayer tracing. In this case, you can pass the desired guardrails to the `trace` decorator, and they will be applied to the inputs and outputs of the traced function.
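The general pattern of a decorator that runs checks on a function's input and output can be sketched in plain Python. This is only an illustration of the mechanism, not the Openlayer `trace` decorator: `apply_guardrails`, `Blocked`, `mask_digits`, and `reject_secret` are hypothetical names, and guardrails are simplified here to functions that return the (possibly modified) text or raise to block it.

```python
from functools import wraps
from typing import Callable, List

# Simplifying assumption: a guardrail is a function from text to text.
Check = Callable[[str], str]


class Blocked(Exception):
    """Raised by a check to stop processing (hypothetical)."""


def mask_digits(text: str) -> str:
    # A "modify" check: replace every digit with '#'.
    return "".join("#" if ch.isdigit() else ch for ch in text)


def reject_secret(text: str) -> str:
    # A "block" check: refuse text that contains a forbidden word.
    if "secret" in text.lower():
        raise Blocked("text contains a forbidden word")
    return text


def apply_guardrails(checks: List[Check]):
    """Wrap a str -> str function so the checks run on its input and its output."""
    def decorator(func: Callable[[str], str]) -> Callable[[str], str]:
        @wraps(func)
        def wrapper(prompt: str) -> str:
            for check in checks:
                prompt = check(prompt)   # validate or modify the input
            output = func(prompt)
            for check in checks:
                output = check(output)   # validate or modify the output
            return output
        return wrapper
    return decorator


@apply_guardrails([reject_secret, mask_digits])
def echo(prompt: str) -> str:
    return f"You said: {prompt}"
```

With this sketch, `echo("call 555")` reaches the wrapped function with the digits already masked, while `echo("my secret")` raises `Blocked` before the function body runs.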

Prerequisites: Besides `openlayer-guardrails`, you need to have the `openlayer` library installed and tracing correctly configured in your project to run the example below.